Why IoT Projects Fail at the Integration Layer: An Architect's Playbook for CIOs and CTOs

Introduction: The Trillion-Dollar Integration Gap

Global enterprise spending on the Internet of Things has crossed historic thresholds. Analyst forecasts across IDC, Gartner, and McKinsey converge on the same story: IoT is now a trillion-dollar category spanning manufacturing, retail, healthcare, utilities, logistics, and smart infrastructure. Boards have approved the budgets. Vendors have delivered the devices. Cloud platforms have matured to industrial scale.

And yet, a remarkably consistent finding recurs across major IoT studies of the last five years: a large share of IoT initiatives (by some estimates well over half) either stall in pilot, fail to scale, or deliver a fraction of the business case that justified them in the first place.

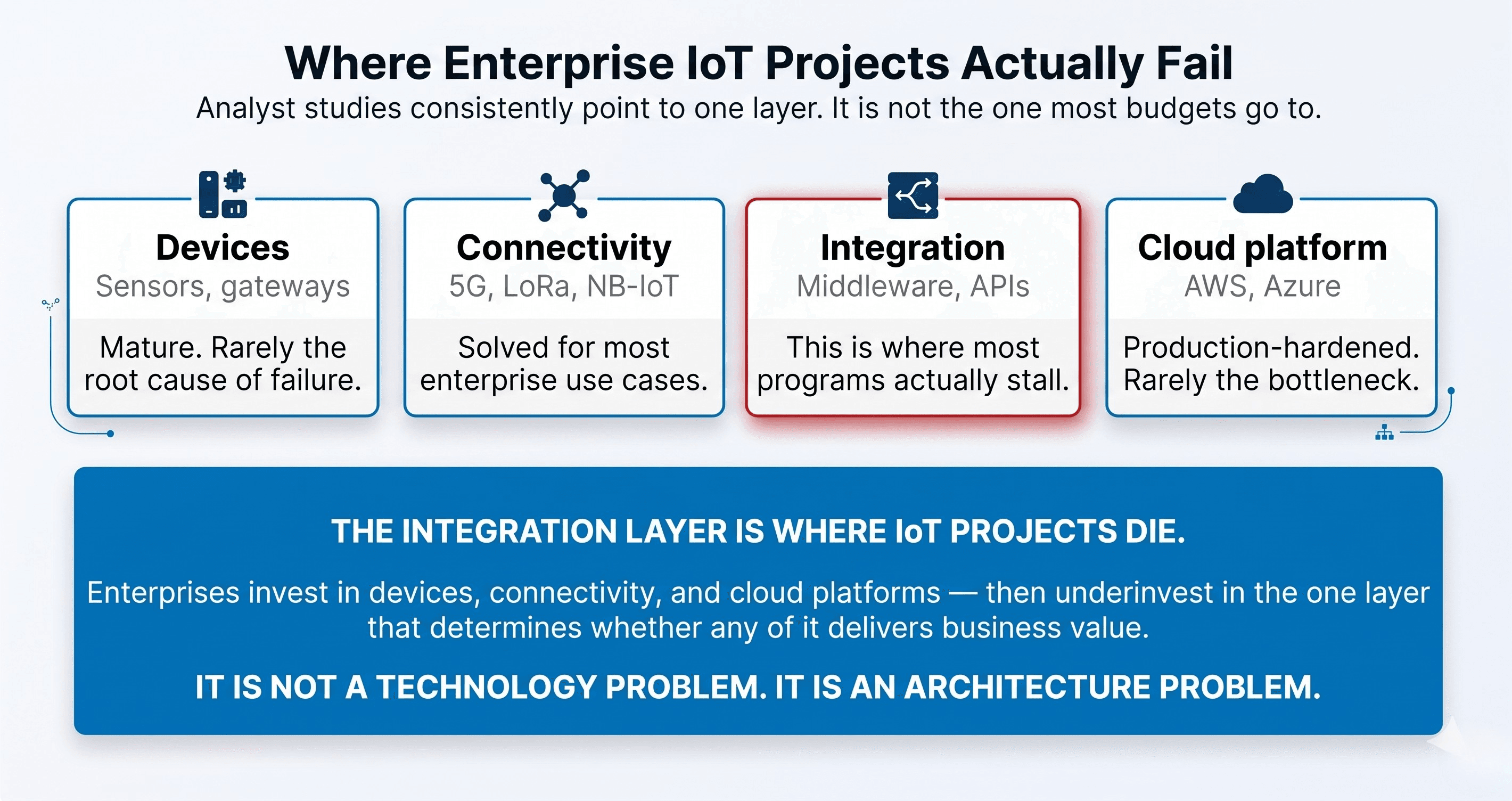

The failure pattern is almost never where executives expect to find it. It is rarely the sensors, the cloud platform, or the network. The failure happens in the middle — in the integration layer where IoT data meets the enterprise systems of record that actually run the business:

- The ERP

- The CRM

- The OMS and WMS

- The MES and CMMS

- The data warehouse

- The legacy mainframe that still processes financial close

- The on-premises Oracle database that the finance team refuses to touch

- The SAP ECC deployment that was customized beyond recognition a decade ago

This is the real IoT problem. And it is an architecture problem, not a technology problem.

This playbook is for the CIOs, CTOs, Chief Digital Officers, and enterprise architects who are accountable for IoT delivering actual business outcomes — not just dashboards. It walks through why integration fails, what architectural patterns actually work, what decisions leaders must personally own, and what a production-grade reference architecture looks like when you stop treating IoT as a standalone program and start treating it as an integration discipline.

Why CIOs and CTOs Are Rethinking IoT Strategy in 2026

The era of "connect everything and figure out the value later" is over.

For most of the last decade, enterprises approached IoT as a collection of disconnected initiatives: a predictive maintenance pilot in one plant, a smart shelf experiment in one store, a connected vehicle program in one logistics division. Each initiative produced its own dashboards, its own data silos, and its own integration workarounds. The pattern was tolerated because each pilot was small enough to operate outside the core enterprise architecture.

Three structural shifts have made that approach obsolete.

The First Shift: The Rise of AI-Driven Operations

Generative and agentic AI systems are no longer pattern-matching demonstrations — they are moving into operational decision loops across supply chain, customer service, inventory, and field operations. These systems are only as good as the real-time operational data feeding them. An AI agent deciding whether to reroute a shipment, expedite a replenishment, or trigger a field-service dispatch needs IoT telemetry integrated into the same context as ERP inventory, CRM customer data, and OMS order state. Disconnected IoT data is effectively invisible to AI.

The Second Shift: The Collapse of the Batch-Processing Paradigm

Enterprise systems were historically designed around nightly batch jobs. IoT is inherently streaming. When a temperature excursion in a pharmaceutical cold chain triggers a compliance event, the ERP cannot wait until a 2 a.m. batch run to learn about it. When a production line sensor detects a quality drift, the MES and the procurement system need to know within seconds, not shifts. The integration architecture must reconcile event-driven data with transaction-driven systems of record, and most legacy architectures simply cannot.

The Third Shift: The Economics of Edge Computing

Processing at the edge is no longer exotic. Industrial gateways now run containers, ML models, and local rule engines. This changes the integration question from "how do we get all the data to the cloud?" to "what decisions happen where, what data flows where, and how do we keep the enterprise systems of record coherent across all of it?" That is an architecture problem that only a CIO or CTO can own.

The Five Root Causes of IoT Integration Failure

When IoT programs underperform, the postmortem almost always converges on the same five failure patterns. They are not independent — they compound each other — but each deserves explicit attention.

1. Protocol and Data Model Fragmentation

IoT devices do not speak one language. Industrial equipment communicates over OPC-UA, Modbus, PROFINET, EtherNet/IP, and BACnet. Commercial and consumer devices favor MQTT, CoAP, HTTP/HTTPS, and WebSocket-based protocols. Enterprise systems, meanwhile, expect SOAP, REST, OData, SAP IDocs, EDI, or proprietary ERP-native connectors.

The semantic gap between a PLC emitting a binary-encoded register value and an ERP expecting a structured purchase order confirmation is enormous. Most failed IoT projects underestimated this gap. "Connecting" a device actually means translating protocols, normalizing data models, reconciling units of measure, aligning timestamps across clock-drifted devices, and mapping device-native identifiers to enterprise master data. None of that happens by accident.
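As a rough illustration of what that translation involves, the sketch below decodes two 16-bit registers into a float, converts the unit, and stamps the event in UTC. The register layout (a big-endian IEEE 754 float split across two registers), the Fahrenheit source unit, and every field name are assumptions made for this example, not properties of any particular device or gateway.

```python
import struct
from datetime import datetime, timezone


def decode_float32(high_word: int, low_word: int) -> float:
    """Combine two 16-bit registers (big-endian word order, assumed) into a float."""
    raw = struct.pack(">HH", high_word, low_word)
    return struct.unpack(">f", raw)[0]


def fahrenheit_to_celsius(value_f: float) -> float:
    return (value_f - 32.0) * 5.0 / 9.0


def normalize(device_id: str, registers: list[int], received_at: datetime) -> dict:
    """Turn a raw register read into a unit-normalized, timestamped event."""
    temp_f = decode_float32(registers[0], registers[1])
    return {
        "device_id": device_id,
        "metric": "temperature",
        "value": round(fahrenheit_to_celsius(temp_f), 2),
        "unit": "degC",
        # Use gateway receive time in UTC rather than a drift-prone device clock.
        "timestamp": received_at.astimezone(timezone.utc).isoformat(),
    }


# 0x42C8 0x0000 is the IEEE 754 encoding of 100.0 (degrees Fahrenheit here).
event = normalize(
    "MQTT-GW-4471",
    [0x42C8, 0x0000],
    datetime(2026, 1, 1, tzinfo=timezone.utc),
)
print(event)
```

Mapping the device identifier to enterprise master data is a separate step, and in practice each device family needs its own decoding rules.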

2. Legacy Systems Were Never Designed for Event-Driven Workloads

ERPs, WMSs, MESs, and most systems of record were architected for human-initiated or scheduled transactions. IoT inverts this assumption. A single mid-sized factory can generate millions of telemetry events per day. A connected fleet of a few thousand vehicles produces tens of millions.

Attempting to push raw IoT data directly into transactional systems is not merely inefficient — it is architecturally destructive. It breaks database performance, corrupts audit trails, triggers licensing cost explosions, and in some cases violates the system's supported integration patterns entirely.

3. Absent Middleware and Integration Strategy

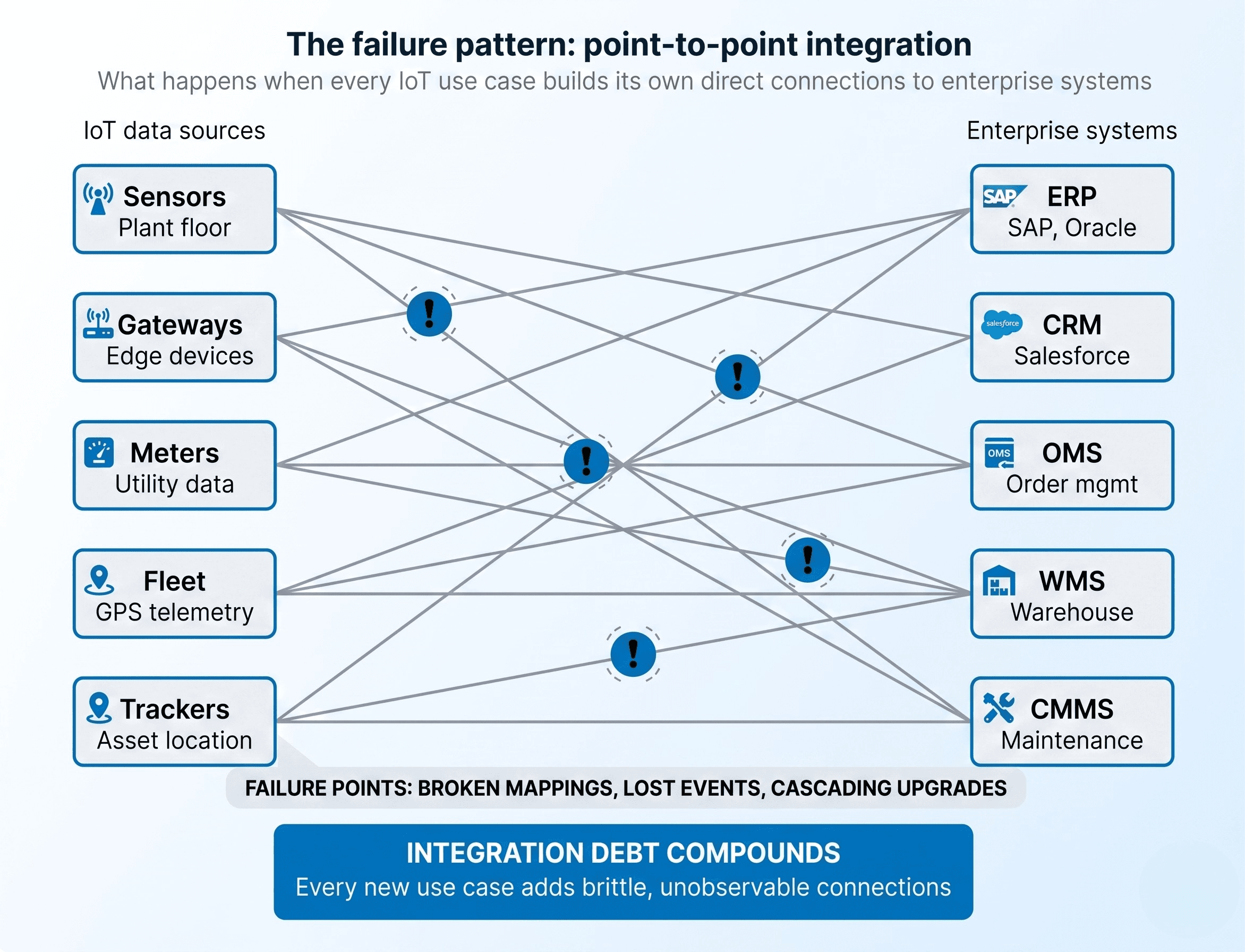

The most common architectural failure in IoT programs is the absence of a deliberate middleware layer. Instead, integration happens through accumulated point-to-point connections: a Lambda function that reads from IoT Core and writes to the ERP; a batch script that exports sensor data nightly; a custom webhook that pushes alerts from one system to another.

Each connection works in isolation. Collectively, they form an unobservable, unmaintainable mesh. This is integration debt, and it compounds faster than most other forms of technical debt: every new device type or consuming system adds another set of point-to-point links to build, monitor, and change, while IoT data volumes keep climbing.

4. Data Governance and Master Data Misalignment

IoT devices have their own identifiers — MAC addresses, device IDs, serial numbers, UUIDs. Enterprise systems have their own — asset IDs, equipment numbers, material numbers, customer IDs, location codes. The unglamorous but decisive question is: how does a telemetry event from device MQTT-GW-4471 get correlated to asset EQ-000238341 in the CMMS and location PLANT-07/LINE-03/STATION-B in the MES?

Most IoT programs defer this question. The result is a data lake full of telemetry that cannot be joined to operational context — which means it cannot support AI models, root cause analysis, or automated workflows. Master data alignment is not a data engineering task. It is a governance discipline that must be established before the integration layer is built.
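A minimal sketch of that correlation step follows, using the identifiers from the example above. The in-memory `DEVICE_REGISTRY` dictionary and the `enrich` function are hypothetical stand-ins. A real deployment would presumably resolve identifiers through a governed master data service.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AssetContext:
    asset_id: str   # equipment number in the CMMS
    location: str   # location path in the MES


# Hypothetical cross-reference table; stands in for a master data service.
DEVICE_REGISTRY = {
    "MQTT-GW-4471": AssetContext("EQ-000238341", "PLANT-07/LINE-03/STATION-B"),
}


def enrich(event: dict) -> Optional[dict]:
    """Attach enterprise identifiers, or return None if the device is unmapped."""
    context = DEVICE_REGISTRY.get(event["device_id"])
    if context is None:
        return None  # caller should quarantine the event rather than guess
    return {**event, "asset_id": context.asset_id, "location": context.location}


mapped = enrich({"device_id": "MQTT-GW-4471", "temperature_c": 71.4})
unmapped = enrich({"device_id": "UNKNOWN-0001", "temperature_c": 20.0})
print(mapped)
print(unmapped)
```

Returning `None` for unmapped devices is a deliberate choice in this sketch: telemetry that cannot be tied to an asset is surfaced as a governance gap instead of flowing silently into the data lake.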

5. Security, Identity, and Compliance Blind Spots

IoT dramatically expands the enterprise attack surface. Every connected device is an identity that can be impersonated, a credential that can be stolen, and a network endpoint that can be pivoted from. The integration layer is where this risk concentrates — where device-originated data crosses into privileged enterprise systems.

Failed IoT programs routinely discover — often during an audit, occasionally during an incident — that devices were provisioned with shared credentials, certificates were never rotated, and the integration platform accepts inbound telemetry without strong cryptographic identity validation. If the integration layer is not designed around zero-trust principles from day one, retrofitting them after deployment is painful, expensive, and often incomplete.

The Hidden Architectural Debt Behind Most IoT Failures

Beneath the five symptoms above lies a deeper pattern: most enterprises attempt IoT integration by extending architectures that were never meant to carry it. There are four recurring forms of this debt.

Tight coupling. A Lambda function directly calls an ERP API. A device-management platform is hard-wired to a specific CRM instance. A middleware job hardcodes field mappings between a sensor payload and an OMS schema. Any change on either side cascades into a chain of brittle failures.

Synchronous-only legacy APIs. Many enterprise systems expose only request-response interfaces. When an IoT integration needs to deliver a burst of events, there is no native buffering or retry mechanism. Integration engineers end up building ad-hoc queues, retry logic, and dead-letter handling — none of which is observable or governable.

Absence of an event backbone. Without a durable, replayable event stream, every integration becomes a fire-and-forget pipeline. Lost events are lost forever. New consumers cannot be added without rebuilding upstream plumbing. Event-driven architecture is not a luxury for IoT at enterprise scale — it is load-bearing infrastructure.

"We'll integrate it properly later." Every IoT proof of concept that gets rushed into production without a real integration strategy becomes, within twelve to eighteen months, the legacy system that the next project has to work around. The debt is intergenerational.

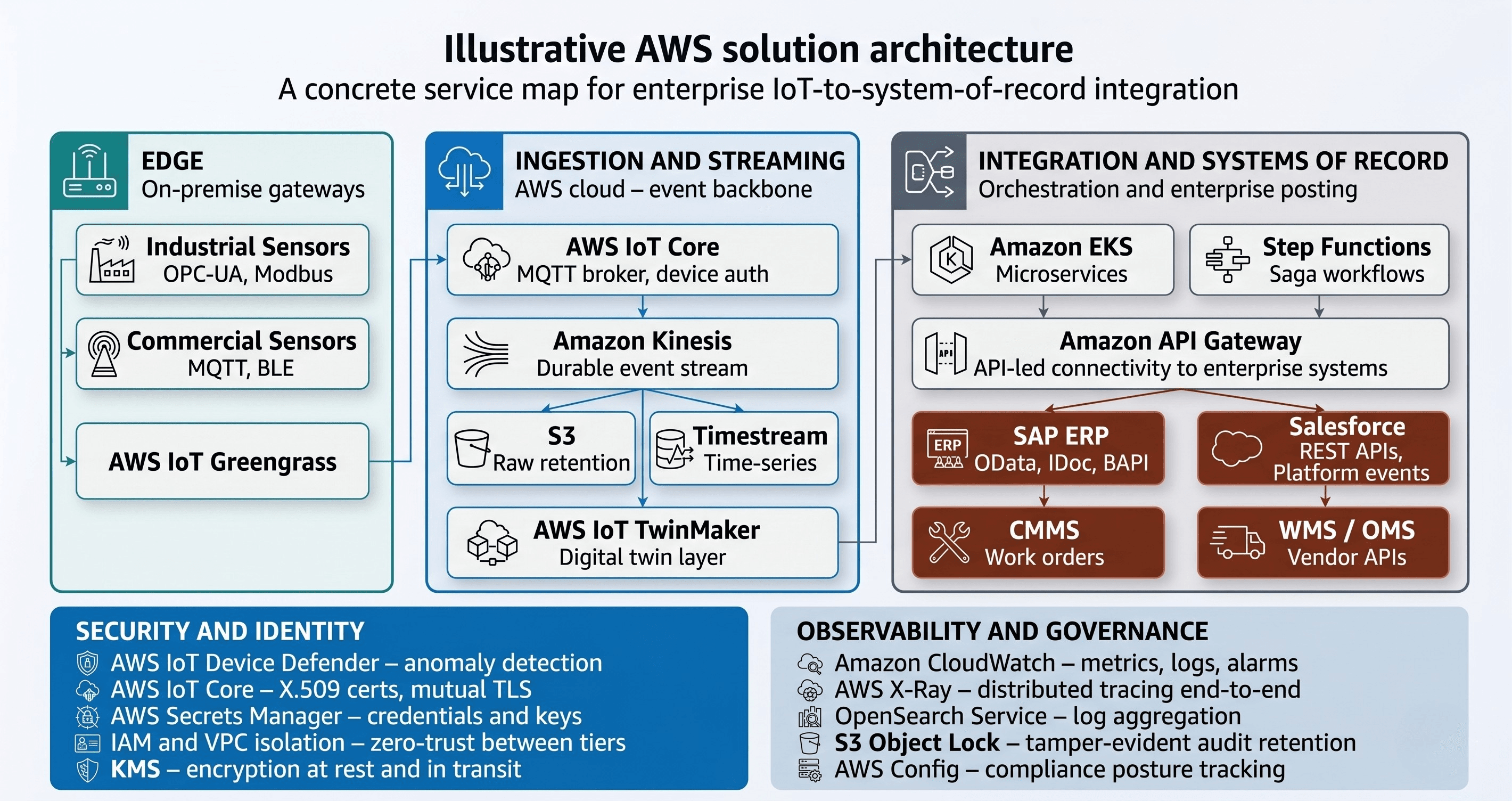

The Integration Architecture Playbook: A Reference Model

What follows is a layered reference architecture that has proven itself across enterprise IoT deployments. It is deliberately platform-aware — with specific references to AWS IoT Core and Azure IoT Hub — but the pattern is portable across cloud providers and hybrid environments.

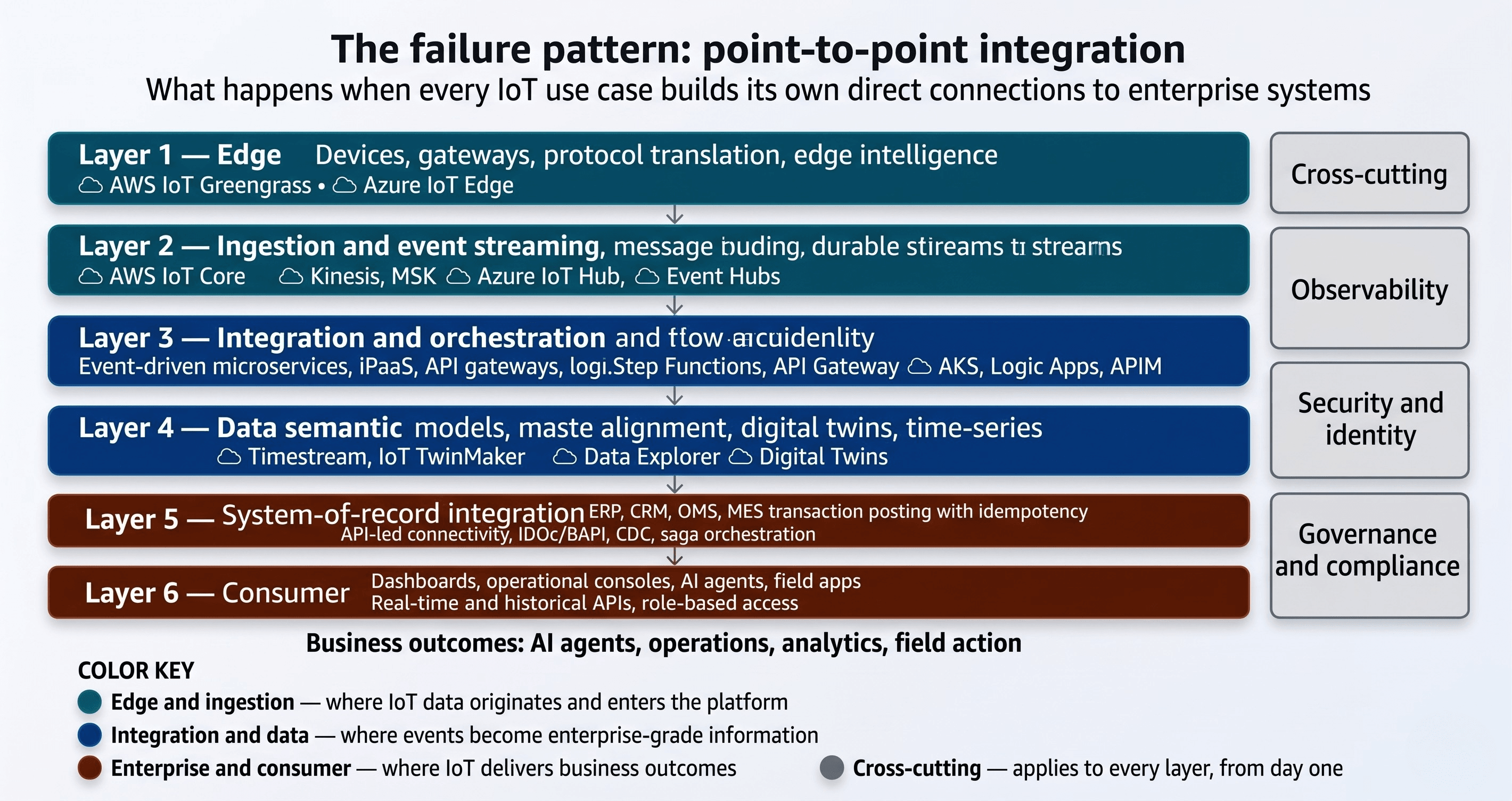

Think of the architecture as six horizontal layers, with three cross-cutting concerns that span all of them.

Layer 1: The Edge Layer

This is where physical devices, machines, and sensors emit data. It includes the devices themselves and the edge gateways that aggregate, translate, and often pre-process data before it leaves the site.

Responsibilities:

- Protocol translation from industrial protocols (OPC-UA, Modbus, PROFINET) and short-range wireless protocols (BLE, Zigbee) into cloud-facing messaging protocols (MQTT, HTTPS, AMQP)

- Local buffering for intermittent connectivity — critical in remote sites, vehicles, or plants with unreliable WAN links

- Edge intelligence for latency-sensitive decisions: anomaly detection, filtering, local rule execution, on-device ML inference

- Secure device identity and mutual TLS-based authentication with the cloud platform

AWS implementation: AWS IoT Greengrass runs on edge gateways and provides local Lambda execution, local MQTT brokering, machine learning inference, and secure connection to AWS IoT Core.

Azure implementation: Azure IoT Edge provides an equivalent capability, running containerized modules on edge devices and connecting to Azure IoT Hub.
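The local-buffering responsibility listed above can be pictured as a bounded store-and-forward queue. The sketch below is an in-memory illustration only. The class name, the drop-oldest overflow policy, and the `send` callback are assumptions, and a production gateway would normally persist the buffer to disk.

```python
from collections import deque
from typing import Callable


class StoreAndForwardBuffer:
    """Bounded FIFO buffer that holds messages while the uplink is down."""

    def __init__(self, capacity: int) -> None:
        # When full, deque(maxlen=...) silently drops the oldest entry.
        self._queue: deque = deque(maxlen=capacity)

    def enqueue(self, message: dict) -> None:
        self._queue.append(message)

    def flush(self, send: Callable[[dict], bool]) -> int:
        """Send buffered messages in order; stop at the first failure."""
        sent = 0
        while self._queue:
            if not send(self._queue[0]):
                break  # uplink still down; keep the message for the next attempt
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._queue)


buffer = StoreAndForwardBuffer(capacity=3)
for seq in range(5):          # five readings arrive during an outage
    buffer.enqueue({"seq": seq})

delivered = []


def fake_send(message: dict) -> bool:
    delivered.append(message["seq"])
    return True


sent_count = buffer.flush(fake_send)
print(sent_count, delivered)
```

Whether to drop the oldest or the newest readings on overflow is a business decision. Safety telemetry and billing telemetry may well need different answers.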

Layer 2: The Ingestion and Event Streaming Layer

This is the first cloud-side layer — the entry point where device telemetry arrives, is authenticated, and is routed into the enterprise data fabric.

Responsibilities:

- Secure device connection at scale — hundreds of thousands to millions of concurrent device sessions

- Message ingestion with strong identity verification (X.509 certificates, IAM-signed connections)

- Routing to downstream processors based on topic, payload content, or device metadata

- Persistence of raw events in a replayable, durable stream for downstream consumers

AWS implementation: AWS IoT Core serves as the MQTT broker and device gateway. Events are forwarded to Amazon Kinesis Data Streams or Amazon MSK. Raw data lands in Amazon S3 for long-term retention.

Azure implementation: Azure IoT Hub handles device ingestion. Azure Event Hubs handles downstream event streaming, with Azure Event Grid available for routing discrete events to subscribers. Azure Data Lake Storage provides durable raw-event persistence.
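Topic-based routing, one of the responsibilities listed above, usually rests on MQTT-style topic filters, where `+` matches a single level and `#` matches all remaining levels. The sketch below is a simplified matcher written for illustration. It ignores special cases such as `$`-prefixed system topics, and the `ROUTES` table and target names are hypothetical. Managed brokers provide this matching themselves.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT-style topic filter matches a concrete topic."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")

    for index, level in enumerate(filter_levels):
        if level == "#":
            # Multi-level wildcard: valid only as the last level of the filter.
            return index == len(filter_levels) - 1
        if index >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[index]:
            return False
    return len(filter_levels) == len(topic_levels)


# Hypothetical routing table: topic filter -> downstream target name.
ROUTES = {
    "plant/+/temperature": "cold-chain-stream",
    "plant/#": "raw-archive",
}


def route(topic: str) -> list[str]:
    """List every downstream target whose filter matches the topic."""
    return [
        target
        for topic_filter, target in ROUTES.items()
        if topic_matches(topic_filter, topic)
    ]


print(route("plant/line3/temperature"))
print(route("plant/line3/vibration"))
print(route("fleet/truck9/gps"))
```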

The critical architectural decision at this layer: treat the event stream as the system of record for raw telemetry. Every downstream system consumes from the stream, not from point-to-point pipes. This is the single most important architectural pattern in enterprise IoT.
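To make the "stream as system of record" idea concrete, here is a toy append-only log in which each consumer keeps its own read offset. It is a sketch of the concept only. The `EventLog` class and the consumer names are invented for this example, and it has none of the durability, partitioning, or retention features of Kinesis, Kafka, or Event Hubs.

```python
class EventLog:
    """Append-only log; each consumer tracks its own read position."""

    def __init__(self) -> None:
        self._events: list[dict] = []
        self._offsets: dict[str, int] = {}

    def append(self, event: dict) -> int:
        self._events.append(event)
        return len(self._events) - 1  # offset of the new event

    def poll(self, consumer: str, max_events: int = 100) -> list[dict]:
        """Return unread events for this consumer and advance its offset."""
        start = self._offsets.get(consumer, 0)
        batch = self._events[start:start + max_events]
        self._offsets[consumer] = start + len(batch)
        return batch

    def rewind(self, consumer: str, offset: int = 0) -> None:
        """Reset a consumer so it can replay history."""
        self._offsets[consumer] = offset


log = EventLog()
for seq in range(3):
    log.append({"seq": seq})

erp_batch = log.poll("erp-adapter")       # reads all three events
late_batch = log.poll("new-ml-consumer")  # added later, sees the same history
log.rewind("erp-adapter")
replayed = log.poll("erp-adapter")        # replay after a downstream fix
print(len(erp_batch), len(late_batch), len(replayed))
```

Because reading never removes events, adding the second consumer required no change to the producer or to the first consumer.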

Layer 3: The Integration and Orchestration Layer

This is where IoT data meets enterprise systems. It is the layer where most failures happen — and the layer that most IoT programs underinvest in.

Responsibilities:

- Consuming events from the streaming layer and translating them into the protocols, schemas, and transaction patterns that enterprise systems expect

- Orchestrating multi-step business processes that span IoT events and enterprise systems

- Managing API contracts, versioning, and backward compatibility between IoT-side and enterprise-side systems

- Handling asynchronous patterns, retries, dead-letter queues, and idempotency

Implementation approaches:

- Event-driven microservices on container platforms (ECS, EKS, AKS, Azure Container Apps)

- iPaaS platforms (MuleSoft, Boomi, Informatica, Azure Logic Apps, AWS Step Functions with EventBridge)

- API management platforms (Amazon API Gateway, Azure API Management, Kong, Apigee)

- Custom middleware reserved for genuinely unique, ultra-low-latency requirements
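The retry, dead-letter, and idempotency responsibilities listed for this layer can be sketched in a few lines. Everything here is illustrative: `deliver`, `flaky_post`, and the `event_id` field are hypothetical names, the duplicate check lives in process memory where a real service would use a durable store, and backoff between attempts is omitted.

```python
from typing import Callable


def deliver(
    events: list[dict],
    post: Callable[[dict], None],
    max_attempts: int = 3,
) -> tuple[list[str], list[dict]]:
    """Post each event once per event_id; park repeated failures in a DLQ."""
    delivered_ids: list[str] = []
    seen: set[str] = set()
    dead_letters: list[dict] = []

    for event in events:
        event_id = event["event_id"]
        if event_id in seen:
            continue  # duplicate delivery from upstream; skip it
        for attempt in range(1, max_attempts + 1):
            try:
                post(event)
                seen.add(event_id)
                delivered_ids.append(event_id)
                break
            except ConnectionError:
                if attempt == max_attempts:
                    dead_letters.append(event)
                # A real implementation would back off before retrying.
    return delivered_ids, dead_letters


calls = {"count": 0}


def flaky_post(event: dict) -> None:
    """Stand-in for an enterprise API call; always fails for event e2."""
    calls["count"] += 1
    if event["event_id"] == "e2":
        raise ConnectionError("ERP endpoint unavailable")


incoming = [{"event_id": "e1"}, {"event_id": "e1"}, {"event_id": "e2"}]
ok_ids, dlq = deliver(incoming, flaky_post)
print(ok_ids, dlq, calls["count"])
```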

Layer 4: The Data and Semantic Layer

This is where raw events become meaningful, queryable, enterprise-grade data.

Implementation approaches:

- Time-series databases for telemetry: Amazon Timestream, Azure Data Explorer, InfluxDB

- Data lakes and lakehouses for analytical workloads: S3 with Athena or Redshift Spectrum, Azure Data Lake with Synapse

- Master data management platforms for identifier reconciliation: Informatica, Reltio, or custom graph-database-backed services

- Digital twin services for contextualized operational state: AWS IoT TwinMaker, Azure Digital Twins

The digital twin pattern deserves particular attention. A well-implemented digital twin provides a single, semantic representation of a physical asset that abstracts away device-level complexity and exposes a clean, queryable interface to enterprise systems.

Layer 5: The System-of-Record Integration Layer

This is where the architecture actually delivers business outcomes — by updating ERPs, CRMs, OMSs, WMSs, MESs, and other enterprise systems with IoT-derived intelligence.

Common integration patterns at this layer:

- API-based integration for modern ERP and CRM systems (S/4HANA, Dynamics 365, Salesforce, Oracle Fusion)

- IDoc, BAPI, or RFC-based integration for older SAP deployments

- File-based or EDI integration for systems that genuinely cannot support real-time APIs

- Change Data Capture (CDC) for bidirectional sync scenarios where enterprise state must flow back to the IoT platform

The discipline at this layer is restraint. Not every IoT event deserves a system-of-record transaction. The integration layer's job is to decide what matters, aggregate appropriately, and post only the transactions that have clear business meaning.
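One way to picture that restraint: collapse a run of out-of-limit readings into a single excursion record, and ignore short blips entirely. The function name, the threshold, and the "three consecutive readings" rule below are assumptions chosen for the example, not recommended values.

```python
def detect_excursions(
    readings: list[float], limit: float, min_consecutive: int
) -> list[dict]:
    """Collapse each sustained run of out-of-limit readings into one record."""
    excursions = []
    run_start = None
    for index, value in enumerate(readings):
        if value > limit:
            if run_start is None:
                run_start = index
        else:
            if run_start is not None and index - run_start >= min_consecutive:
                excursions.append({
                    "start": run_start,
                    "end": index - 1,
                    "peak": max(readings[run_start:index]),
                })
            run_start = None
    # Close a run that is still open at the end of the window.
    if run_start is not None and len(readings) - run_start >= min_consecutive:
        excursions.append({
            "start": run_start,
            "end": len(readings) - 1,
            "peak": max(readings[run_start:]),
        })
    return excursions


# Seven cold-chain readings in degrees Celsius; only one sustained excursion.
readings = [4.0, 9.1, 4.2, 8.5, 8.9, 9.4, 4.1]
to_post = detect_excursions(readings, limit=8.0, min_consecutive=3)
print(to_post)
```

In this example seven telemetry events produce one candidate transaction for the system of record; the single spike at index 1 stays in the stream for analytics but never reaches the ERP.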

Layer 6: The Consumer Layer

This is where humans and AI systems actually use the data: dashboards, operational consoles, mobile apps, AI agents, analytics workbenches, and field-operations tools. This layer is intentionally the thinnest in the playbook: when the layers beneath it are built correctly, consumers need very little integration logic of their own.

Cross-Cutting Concern 1: Observability

Every layer must emit structured logs, metrics, and traces. End-to-end observability — tracing a single sensor event from edge to system of record — is essential for debugging, SLA management, and regulatory audit. Platforms like Amazon CloudWatch, Azure Monitor, Datadog, New Relic, and OpenTelemetry-based stacks are the foundation.

Cross-Cutting Concern 2: Security and Identity

Zero-trust from edge to enterprise. Strong device identity via X.509 certificates managed through a proper PKI. Secrets management through AWS Secrets Manager, Azure Key Vault, or HashiCorp Vault. Network segmentation between OT and IT domains. Principle of least privilege on every API, service, and identity.

Cross-Cutting Concern 3: Governance and Compliance

Data residency rules, retention policies, audit trails, and alignment with relevant regulatory frameworks: HIPAA for healthcare telemetry, NERC CIP for utilities, ZATCA and UAE eInvoicing mandates where IoT triggers financial transactions, and sector-specific frameworks globally. Governance is not a separate project. It is designed into the integration layer.

Integration Patterns That Actually Work

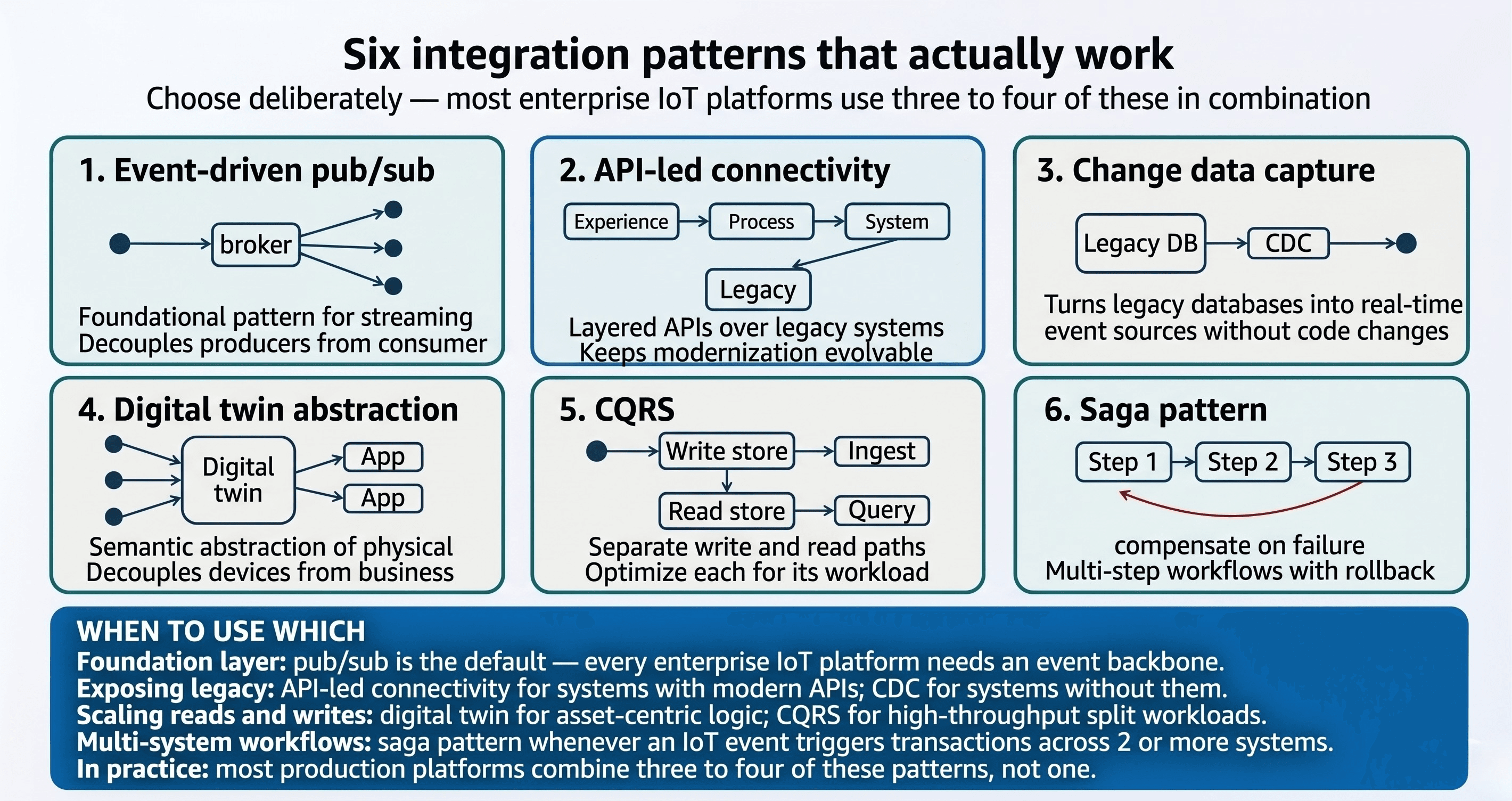

Event-Driven Integration (Publish/Subscribe)

The foundational pattern. Devices publish events to topics. Multiple consumers subscribe independently. Producers and consumers are decoupled — neither needs to know about the other's existence, scaling characteristics, or availability.

When to use: Almost always, as the primary pattern at the streaming layer.

Why it works: New consumers can be added without modifying producers. The pattern naturally supports high fan-out, which is the default condition in enterprise IoT.

API-Led Connectivity

A layered API strategy — system APIs that expose raw capabilities, process APIs that orchestrate business logic, and experience APIs that serve specific consumer needs.

When to use: As the standard pattern for exposing legacy enterprise systems to the integration layer.

Why it works: A system API over an aging ERP can be re-implemented when the ERP is modernized, without disturbing the dozens of process and experience APIs built on top of it.

Change Data Capture (CDC)

Streaming database change events from enterprise systems into the IoT integration layer, so that IoT-side processes can react to enterprise state changes in near-real-time.

When to use: When the IoT platform needs to know about enterprise events — a work order status change, a master data update, a customer record change — without polling.

Why it works: CDC tools (Debezium, AWS DMS, Azure Data Factory with change feed) turn legacy databases into event sources without modifying the legacy application.

Digital Twin as an Integration Abstraction

Modeling each physical asset as a digital twin with a defined property set, behaviors, and relationships. Integration logic interacts with the twin, not with raw device telemetry.

When to use: Whenever multiple downstream systems need a consistent, semantic view of an asset.

Why it works: The twin absorbs device-level complexity. Everything above the twin — integration services, dashboards, AI models — interacts with a clean, stable abstraction.
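The sketch below shows the shape of that abstraction in plain Python. It is not the AWS IoT TwinMaker or Azure Digital Twins API. `AssetTwin`, `CHANNEL_MAP`, the channel names, and the 80 °C limit are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical mapping from device-native channel names to twin properties.
CHANNEL_MAP = {
    "ch1_raw": "bearing_temp_c",
    "vib_x": "vibration_mm_s",
}


@dataclass
class AssetTwin:
    """Semantic view of one asset; hides which sensor reports which property."""

    asset_id: str
    properties: dict = field(default_factory=dict)

    def apply_telemetry(self, device_payload: dict) -> None:
        """Update twin properties from a device payload, ignoring unknown channels."""
        for channel, value in device_payload.items():
            prop = CHANNEL_MAP.get(channel)
            if prop is not None:
                self.properties[prop] = value

    def needs_inspection(self, temp_limit_c: float = 80.0) -> bool:
        """Business-level question that consumers ask of the twin."""
        return self.properties.get("bearing_temp_c", 0.0) > temp_limit_c


twin = AssetTwin("EQ-000238341")
twin.apply_telemetry({"ch1_raw": 84.5, "fw_debug": 17})  # one sensor
twin.apply_telemetry({"vib_x": 2.1})                     # a different sensor
print(twin.properties, twin.needs_inspection())
```

If a sensor is replaced and its channel names change, only `CHANNEL_MAP` needs to change. Callers of `needs_inspection` are unaffected.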

CQRS (Command Query Responsibility Segregation)

Separating the write path (commands that change state) from the read path (queries that retrieve state), with different data stores optimized for each.

When to use: High-throughput IoT scenarios where read patterns (dashboards, APIs, analytics) differ significantly from write patterns (raw telemetry ingestion).
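A compact sketch of the split: an append-only write model receives every reading, while a separate read model keeps only the latest value per device for dashboards. The class and method names are invented for illustration, and the projection runs synchronously here, whereas real CQRS systems often update read models asynchronously and in a different data store.

```python
from typing import Optional


class TelemetryService:
    def __init__(self) -> None:
        self._write_log: list[dict] = []    # write side: every reading, in arrival order
        self._latest: dict[str, dict] = {}  # read side: newest reading per device

    def record_reading(self, device_id: str, value: float, ts: int) -> None:
        """Command: append the reading, then project it into the read model."""
        reading = {"device_id": device_id, "value": value, "ts": ts}
        self._write_log.append(reading)
        self._project(reading)

    def _project(self, reading: dict) -> None:
        current = self._latest.get(reading["device_id"])
        # Late-arriving readings are stored but do not overwrite newer state.
        if current is None or reading["ts"] >= current["ts"]:
            self._latest[reading["device_id"]] = reading

    def latest(self, device_id: str) -> Optional[dict]:
        """Query: served from the read model without scanning the log."""
        return self._latest.get(device_id)

    def history_size(self) -> int:
        return len(self._write_log)


service = TelemetryService()
service.record_reading("MQTT-GW-4471", 71.4, ts=10)
service.record_reading("MQTT-GW-4471", 69.9, ts=5)  # late arrival
print(service.latest("MQTT-GW-4471"), service.history_size())
```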

Saga Pattern for Distributed Transactions

Coordinating multi-step processes that span IoT events and multiple enterprise systems, with explicit compensation logic for failures.

When to use: Any IoT-triggered workflow that touches multiple systems of record — for example, a quality-event triggering a hold in the MES, a customer notification in the CRM, and a credit memo in the ERP.

Why it works: IoT-to-enterprise workflows cannot rely on traditional two-phase commit across heterogeneous systems. The saga pattern makes failure handling explicit and recoverable.
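The quality-event example above can be sketched as an orchestrated saga: each step pairs an action with a compensation, and a failure triggers the compensations of completed steps in reverse order. The step names and the `run_saga` helper are hypothetical, and the actions are stubs where real code would call the MES, CRM, and ERP.

```python
from typing import Callable

# (step name, action, compensation)
Step = tuple[str, Callable[[], None], Callable[[], None]]


def run_saga(steps: list[Step]) -> tuple[bool, list[str]]:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    journal: list[str] = []
    completed: list[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            action()
            journal.append(f"done:{name}")
            completed.append(step)
        except Exception:
            journal.append(f"failed:{name}")
            for done_name, _, compensate in reversed(completed):
                compensate()
                journal.append(f"compensated:{done_name}")
            return False, journal
    return True, journal


def noop() -> None:
    """Stub for a successful call or compensation."""


def fail_credit_memo() -> None:
    raise RuntimeError("ERP rejected credit memo")


succeeded, journal = run_saga([
    ("mes_hold", noop, noop),
    ("crm_notify", noop, noop),
    ("erp_credit_memo", fail_credit_memo, noop),
])
print(succeeded, journal)
```

The journal is the point of the pattern: after a partial failure there is an explicit record of what ran and what was undone, not an unknown mix of committed and uncommitted changes.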

Why Middleware Architecture Is Non-Negotiable

The instinct for many teams is to wire up a direct call from the IoT platform to enterprise systems. In development environments, this seems clean. In production, it breaks down quickly.

The Make-or-Break Decisions CIOs and CTOs Must Own

Certain architectural decisions are too consequential to delegate. They determine whether the IoT program remains strategically coherent or fragments into dozens of locally-optimized, globally-incompatible implementations.

- Build versus buy on middleware. iPaaS platforms accelerate delivery and reduce maintenance burden, at the cost of licensing expense and some flexibility. Custom-built event-driven microservices offer maximum flexibility at the cost of engineering capacity and operational overhead. The right answer is almost never pure — it is a deliberate mix, framed at enterprise-architecture level, not per-project.

- Cloud, edge, or hybrid processing. Latency requirements, data residency rules, and bandwidth economics determine where each computation should happen. Inconsistent edge-versus-cloud choices across programs create operational chaos.

- Canonical data model ownership. Who owns the enterprise canonical model for assets, devices, locations, and events? If no single owner exists, the model will fragment. This is one of the most under-appreciated CIO decisions in IoT programs.

- Cloud provider strategy. Single-cloud, multi-cloud, or cloud-plus-edge-on-prem? Each option has dramatically different implications for skills, operating model, vendor lock-in, and cost.

- Data residency and sovereignty. For enterprises operating across multiple regulatory regimes — GCC, EU, US, APAC — data residency is a core architectural constraint, not a compliance checkbox.

- Long-term operating model. Who runs the integration platform in production? An internal platform team? A managed service provider? A blended model? Deferring this decision until after go-live is one of the most common and most damaging failure patterns.

Governance, Security, and Compliance: The Non-Negotiables

A production IoT integration platform must be designed around a specific set of non-negotiables. These are not aspirations — they are the minimum viable posture.

- Strong cryptographic device identity. Every device carries a unique X.509 certificate, provisioned through a proper PKI, rotated on a defined schedule, and revocable through a managed process.

- Certificate and key lifecycle management. Provisioning, rotation, revocation, and offboarding must be automated. AWS IoT Core's fleet provisioning and Azure IoT Hub's Device Provisioning Service provide managed capabilities.

- Zero-trust across OT and IT boundaries. Every request — from devices, services, or humans — is authenticated and authorized at the point of use, regardless of network location.

- End-to-end encryption. TLS 1.2 or higher in transit; strong encryption at rest with managed key services; field-level encryption where sensitive telemetry is involved.

- Data sovereignty and residency controls. Explicit rules about which regions and jurisdictions can host which data, enforced at platform level — including UAE data protection frameworks, Saudi Arabia's NCA controls, and NESA for critical infrastructure.

- Audit and traceability. Every event, transaction, and configuration change is logged in a tamper-evident store.

- Continuous security monitoring. Anomalous device behavior must be detected automatically. AWS IoT Device Defender and Microsoft Defender for IoT (formerly Azure Defender for IoT) provide this capability.
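For the audit and traceability item above, one common way to make a log tamper-evident is to chain entries by hash, so that altering any earlier entry invalidates everything after it. The sketch below uses only the Python standard library. The entry layout and function names are assumptions, and hash chaining detects tampering but does not prevent it, so it would normally be paired with write-once storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + body).hexdigest()


def append_entry(chain: list[dict], payload: dict) -> None:
    """Append a payload, linking it to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({
        "payload": payload,
        "prev": prev_hash,
        "hash": _digest(prev_hash, payload),
    })


def verify(chain: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"actor": "svc-integration", "action": "post_goods_movement"})
append_entry(audit_log, {"actor": "ops-admin", "action": "rotate_certificate"})
intact = verify(audit_log)

audit_log[0]["payload"]["action"] = "something_else"  # simulate tampering
after_tamper = verify(audit_log)
print(intact, after_tamper)
```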

The Operating Model: Who Runs IoT Integration After Go-Live?

Most IoT program failures that are attributed to "architecture" are actually Day 2 operations failures. The architecture worked in the pilot. What collapsed was the ability to operate it, evolve it, and absorb new use cases at scale.

Regardless of the model chosen (an internal platform team, a managed service provider, or a blend of the two), certain capabilities must exist: 24×7 platform monitoring and incident response; a defined release management process; ongoing security operations; continuous delivery of integrations for new use cases; and vendor and partner management.

The operating model conversation must happen before go-live. Ideally, it happens before architecture selection — because operating model constraints should inform architectural choices, not the other way around.

What "Done Right" Looks Like: An Illustrative Enterprise Scenario

Consider a multi-site enterprise with operations across several regions — a mix of manufacturing plants, distribution centers, and customer-facing service locations. The business wants IoT-driven visibility and automation across equipment health, environmental monitoring, asset tracking, and operational safety. Existing investments include a customized SAP ERP, Salesforce CRM, an OMS, a WMS, and a CMMS. The company operates under multiple regulatory regimes.

The wrong approach is to run each use case as a standalone project, each with its own cloud footprint, its own integration code, and its own operating model. The right approach looks like this:

A single enterprise IoT platform is established on AWS, with AWS IoT Core as the ingestion backbone. Edge gateways running AWS IoT Greengrass are deployed to every plant and distribution center. Industrial sensors speak OPC-UA and Modbus to the gateways; commercial sensors use MQTT directly. The gateways handle protocol translation, local buffering during WAN outages, and on-edge anomaly detection for latency-sensitive safety scenarios.

AWS IoT Core ingests events, authenticates them using certificates provisioned through a managed PKI, and routes them into Amazon Kinesis Data Streams. Raw events persist to Amazon S3. A set of containerized microservices running on Amazon EKS consume from the stream and apply domain-specific integration logic.

A digital twin representation, implemented using AWS IoT TwinMaker, provides the semantic bridge between device-level telemetry and enterprise asset hierarchies. SAP integration uses OData APIs for modern modules and IDoc-based integration for older areas. Salesforce integration is orchestrated through AWS Step Functions. Security is designed in throughout: certificates are rotated automatically, data is encrypted end-to-end, AWS IoT Device Defender is active, and logs are centralized in Amazon OpenSearch Service with tamper-evident audit retention in S3.

New IoT use cases — a connected quality inspection system, an energy-monitoring program, an environmental-compliance telemetry flow — are delivered as incremental additions to the platform rather than standalone projects. Each new use case inherits the platform's ingestion, security, observability, and operating model. The marginal cost of adding a use case is a fraction of what it would be under a project-by-project approach.

An equivalent architecture on Azure would substitute Azure IoT Hub, Azure IoT Edge, Azure Event Hubs, Azure Digital Twins, Azure Kubernetes Service, Azure API Management, Azure Logic Apps, and Azure Key Vault — with the same layered model, the same patterns, and the same governance principles. The platform is different; the playbook is the same.

Enterprise IoT Integration Readiness Checklist

Before going live with a production IoT integration, verify the following:

- Event backbone established — durable, replayable stream as the system of record for telemetry

- Middleware abstraction layer separating IoT event processing from enterprise system calls

- Master data alignment completed — device identifiers mapped to enterprise asset, location, and product IDs

- Canonical data model defined and owned by a named governance authority

- Zero-trust security architecture — device identity, certificate lifecycle, and OT/IT segmentation in place

- End-to-end observability — logs, metrics, and traces from edge to system of record

- Retry logic and dead-letter queues for failed integration submissions

- Data residency and sovereignty controls enforced at platform level

- Operating model defined — Day 2 ownership, incident response, and release management agreed before go-live

- New use case onboarding process documented — so future programs extend the platform, not bypass it

How Techies Infotech Approaches IoT and Legacy Integration

Techies Infotech builds and operates the integration layer that most IoT programs underinvest in. Our work sits at the intersection of enterprise commerce, ERP integration, cloud infrastructure, and digital transformation — which is where IoT-to-enterprise integration actually lives.

- Enterprise integration depth. We have delivered integrations across ERP, CRM, OMS, WMS, and commerce platforms for enterprises operating at scale. The patterns that work for commerce-to-ERP integration — event-driven architecture, API-led connectivity, data contracts, governance discipline — are the same patterns that work for IoT-to-ERP integration.

- AWS IoT Core specialization. We have designed, built, and operated AWS-based IoT and integration platforms, with hands-on depth in AWS IoT Core, AWS IoT Greengrass, Amazon Kinesis, Amazon EKS, Amazon API Gateway, AWS Step Functions, and the broader AWS integration stack. We extend the same capability to Azure IoT Hub and the Azure integration ecosystem.

- Regulated-integration experience. Our work on ZATCA integration, UAE eInvoicing, and Peppol-based compliance flows has given us a deep operating discipline around regulated, auditable, financially-material integration — precisely the discipline that serious IoT integration requires.

- Long-term managed services. We do not treat integration as a project that ends at go-live. Our managed services model covers ongoing operations, incident response, platform evolution, and continuous delivery of new integrations under defined service-level commitments.

- Enterprise-grade delivery posture. Architecture review rigor, security-by-design, governance integration, and outcome accountability. We build systems that survive audits, scale with the business, and do not become the next generation's legacy debt.

Frequently Asked Questions

Q1

What is the biggest reason IoT integration projects fail?

The largest single reason is the absence of a deliberate integration and middleware strategy. Enterprises invest in devices, connectivity, and cloud platforms but treat integration to systems of record as a tactical activity. The result is a mesh of point-to-point connections that cannot scale, cannot be observed, and cannot absorb new use cases without expensive rework.

Q2

Should legacy ERPs be integrated with IoT directly or through middleware?

Through middleware, in nearly every case. Direct integration tightly couples the ERP to IoT data volumes and event patterns it was never designed to handle, creates performance and licensing risks, and produces brittle integrations that break with any change on either side. A dedicated integration layer — event-driven microservices, an iPaaS platform, or a combination — absorbs the impedance mismatch and keeps both sides evolvable.

Q3

What is the role of event streaming in IoT integration?

Event streaming is the backbone of enterprise IoT integration. A durable, replayable event stream (implemented with Apache Kafka, Amazon Kinesis, Amazon MSK, or Azure Event Hubs) decouples producers from consumers, supports high fan-out, preserves events during consumer outages, and enables new use cases to be added without modifying upstream systems. Without an event backbone, IoT integration does not scale.

Q4

How does digital twin architecture help with legacy integration?

A digital twin provides a clean, semantic abstraction of a physical asset. Integration services interact with the twin rather than with raw device telemetry, which absorbs device-level complexity, normalizes multi-sensor data, and presents a stable interface to enterprise systems. This dramatically reduces the coupling between IoT device changes and integration logic, making both sides independently evolvable.

Q5

What are the security risks of IoT-to-legacy integration?

The primary risks are device impersonation, credential compromise, lateral movement from IoT devices into privileged enterprise networks, and data exfiltration through integration channels. Mitigation requires zero-trust architecture, strong cryptographic device identity, proper certificate lifecycle management, segmentation between OT and IT domains, and continuous monitoring for anomalous device behavior. These controls must be designed in from day one — retrofitting them is painful and often incomplete.
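
Certificate lifecycle management is the control most easily reduced to a concrete check. The sketch below assumes a hypothetical inventory mapping device IDs to certificate expiry timestamps and a 30-day rotation window; a real deployment would pull this data from its PKI or device registry.

```python
from datetime import datetime, timedelta, timezone


def certs_due_for_rotation(inventory, now, window_days=30):
    """Return device IDs whose certificates have expired or expire within the window."""
    cutoff = now + timedelta(days=window_days)
    return sorted(device for device, not_after in inventory.items() if not_after <= cutoff)


# Hypothetical inventory: device ID -> certificate expiry (UTC).
now = datetime(2025, 1, 1, tzinfo=timezone.utc)
inventory = {
    "gateway-01": datetime(2025, 1, 15, tzinfo=timezone.utc),  # expires inside the window
    "pump-7": datetime(2025, 6, 1, tzinfo=timezone.utc),       # not yet due
    "meter-22": datetime(2024, 12, 20, tzinfo=timezone.utc),   # already expired
}

due = certs_due_for_rotation(inventory, now)
```

Running a check like this on a schedule turns certificate expiry from an outage discovered in production into a routine rotation task.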

Q6

When should enterprises use iPaaS versus custom middleware for IoT?

iPaaS is the right choice for well-bounded orchestration, standardized enterprise integrations, and scenarios where delivery speed and maintenance burden matter more than absolute flexibility. Custom middleware — typically event-driven microservices on a container platform — is appropriate for high-throughput, latency-sensitive, or domain-specific logic. Most enterprise IoT platforms use both: iPaaS for the majority of flows, microservices for the high-volume and high-specificity paths.

Q7

What is the difference between AWS IoT Core and Azure IoT Hub?

Both are managed cloud services for secure, scalable device connectivity and ingestion. AWS IoT Core is tightly integrated with the broader AWS ecosystem and is particularly strong in serverless-first architectures and edge scenarios through AWS IoT Greengrass. Azure IoT Hub is tightly integrated with the Azure ecosystem and is particularly strong in enterprises already standardized on Microsoft platforms. The architectural patterns — event streaming, integration layering, digital twins, zero-trust security — apply equivalently on both platforms.

Q8

How long does a production-grade IoT integration platform take to build?

A foundational platform — ingestion, streaming, one or two end-to-end integrations, core security and observability — can typically be delivered in a few months of focused work. A mature, enterprise-wide platform supporting multiple use cases, multiple regions, and a defined operating model is usually a twelve-to-eighteen-month program. The critical discipline is to design for the eventual mature state from the start, even when initial delivery is scoped narrowly.

Q9

Can IoT be integrated with legacy systems that do not have modern APIs?

Yes, though the integration approach differs. For systems with only file-based or batch interfaces, IoT data is aggregated and delivered through scheduled exports. For systems with database-level access, Change Data Capture tooling (Debezium, AWS DMS, Azure Data Factory) can expose state changes as an event stream. For systems with proprietary protocols — older SAP, mainframe applications — specialist connectors and adapter patterns exist for most common platforms. The common principle is to build an abstraction layer between the legacy system and the IoT integration platform.
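
For the file-based case, the abstraction layer can be as simple as an adapter that aggregates telemetry into the flat file a legacy system already knows how to import. The column names, the field names, and the two-decimal format below are assumptions for illustration; an actual adapter would follow the target system's import specification.

```python
import csv
import io
from collections import defaultdict


def build_batch_export(readings):
    """Aggregate per-asset runtime hours into a CSV payload for a scheduled export."""
    totals = defaultdict(float)
    for reading in readings:
        totals[reading["asset_id"]] += reading["runtime_hours"]

    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["ASSET_ID", "RUNTIME_HOURS"])  # hypothetical legacy column names
    for asset_id in sorted(totals):
        writer.writerow([asset_id, f"{totals[asset_id]:.2f}"])
    return buffer.getvalue()


readings = [
    {"asset_id": "A-100", "runtime_hours": 1.5},
    {"asset_id": "B-200", "runtime_hours": 1.25},
    {"asset_id": "A-100", "runtime_hours": 2.0},
]

export = build_batch_export(readings)
```

The legacy system receives one row per asset on its existing batch schedule, while the IoT platform remains free to change how and how often the underlying readings are collected.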

Closing Perspective: Integration Is the Real IoT Strategy

For a decade, enterprise IoT has been described as a device-and-data problem. It is not. It is an integration problem.

The winning IoT strategy for the next decade will not be defined by how many sensors were deployed or how much data was captured. It will be defined by how coherently IoT telemetry is integrated into the enterprise systems that actually run the business — and how quickly the resulting architecture can absorb new use cases, new devices, new regulations, and new AI capabilities without collapsing under its own weight.

The CIOs and CTOs who will be judged well on their IoT investments in five years are the ones making the unglamorous architectural decisions today: investing in an event backbone, establishing canonical data models, designing for zero-trust from day one, choosing an operating model before go-live, and refusing to let individual projects fragment the enterprise architecture in the name of speed.

Integration is not the layer beneath the IoT strategy. Integration is the IoT strategy. Everything else is just plumbing.

Ready to Pressure-Test Your IoT Integration Architecture?

Techies Infotech partners with enterprise CIOs, CTOs, and digital transformation leaders to design, build, and operate integration platforms that deliver IoT ROI at scale. If you are scoping a new IoT program, rescuing one that has stalled, or modernizing an architecture that is no longer keeping up with the business, we offer structured architecture reviews and integration readiness assessments grounded in the playbook above.

Reach out to start a conversation about what integration-led IoT looks like for your organization.